Training AI with culture

How is culture relevant to the training of artificial intelligence?

One conjecture about the origin of human-level intelligence is that it arose together with complex society and culture, that is, with the origin and evolution of memes (Dawkins). Culture is composed of memes, which replicate by a two-step process: a meme is (1) recreated within a mind and then (2) enacted as some observable trait. Creativity is necessary to reverse engineer the latent content of a meme, its meaning, in order to accurately output the observable trait (Deutsch).

A human using creativity serves memes as a kind of “meme machine”, making high-fidelity copies of cultural artifacts or traits such as manners, rituals, totems, and tools (Blackmore).

The complexity of cultural artifacts has grown exponentially from stone tools to space telescopes. Culture is a deep library of memes carrying objective knowledge. That knowledge contains truth about the world, while also being riddled with errors (Popper). Knowledge is information with causal power: whatever truth it contains helps cause it to survive in its environment.

Humans who were good at copying cultural artifacts must have risen in stature in their society. This would have put further evolutionary pressure on creativity, even though until the last 300 years or so it was hardly ever used to actually innovate. For most of human history there was no observable improvement within a human’s lifetime, even though humans were intelligent and creative and had language and culture. They copied existing artifacts as faithfully as they could to earn the prestige of society (Deutsch). These incentives drove both the acquisition of intelligence and the disabling of it for anything but replication of the cultural meme pool. Only with the evolution of rational memes, which included among others the idea of actually innovating, did a jump in meme complexity occur and objective knowledge begin to grow exponentially.

Given the above, intelligence is the efficiency of recreating the latent content of previously unknown memes and enacting their cultural traits. This loosely follows Chollet’s definition:

Intelligence is the efficiency with which a learning system turns experience and priors into skill at previously unknown tasks. (Chollet)

Another way to put this is that an intelligent agent can identify and select a previously unknown cultural artifact, guess the latent content that was used to create it, and output a functional replica of that artifact.

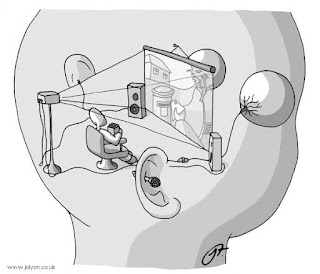

What is the latent content of this cultural artifact?

Culture serves up a deep library of novel artifacts as a training set, and the agent, observing an artifact, guesses its meaning, and in the appropriate context outputs a suitable replica. In that way the agent builds a library of skills, from which it can tackle tougher, more complex artifacts.

A key difficulty with programming intelligence has been that there is little we can specify about what it is that must be created. We can’t specify the knowledge that is to be created without giving away the answer (Deutsch, 2012). Once we have the answer, then applying it turns into skill. Intelligence is fundamentally about closing a knowledge gap, whereas skill is about closing a performance gap.

High skill is not the same as high intelligence. Yet intelligence builds on skill to perceive and manipulate the world.

In order to explain and program intelligence, we must be explicit about where the knowledge exists and the role it has. Intelligence helps complete a task for which the agent does not yet have some necessary knowledge when it begins. The agent is presented with an artifact that it has not seen before and which contains some latent knowledge it does not yet have.

A key property of the artifact, if we are to observe intelligence at work, is that there must exist knowledge critical to forming or using the artifact that cannot be read from the artifact itself.

Most cultural artifacts or traits contain objective knowledge. The artifact can be thought of as the output of some program. The logical depth of an artifact can be measured by the running time of the shortest program that outputs the artifact (Bennett); the length of that program alone measures the artifact’s algorithmic complexity rather than its depth. More challenging artifacts for an intelligent agent will tend to have greater logical depth.
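As a rough illustration of depth, the sketch below contrasts two artifacts whose generating programs are about equally short but perform very different amounts of computation. It is a toy, not a measurement: logical depth is not computable in general, the step counts are only upper bounds, and using iterated SHA-256 as a stand-in for a "deep" artifact rests on the assumption that no shortcut to the result exists. The function names `shallow_artifact` and `deep_artifact` are hypothetical.

```python
import hashlib


def shallow_artifact(n_bytes=32):
    """Short program, trivial computation: repeat one constant byte.

    Returns (artifact, steps), where steps is a crude count of the work done.
    """
    return b"\x00" * n_bytes, 1


def deep_artifact(rounds=100_000):
    """Comparably short program, long computation: iterate SHA-256.

    Assumption: there is no known way to jump to the final digest
    without performing every round, so `rounds` approximates the work.
    """
    digest = b"\x00" * 32
    for _ in range(rounds):
        digest = hashlib.sha256(digest).digest()
    return digest, rounds


shallow, shallow_steps = shallow_artifact()
deep, deep_steps = deep_artifact()

# Both artifacts are 32 bytes long and both programs are a few lines,
# but the second took far more computation to produce.
print(len(shallow), shallow_steps)
print(len(deep), deep_steps)
```

Neither artifact reveals its history on inspection: both are just 32 bytes. The difference lies in the computation needed to produce them, which is what depth is meant to capture.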

An agent given an artifact needs to guess how to replicate it. That is, it needs to guess a suitable program that would output the artifact. The program is not readable from the artifact itself. It needs to be created from whatever prior knowledge the agent has and from its observations of the artifact. The agent can safely assume the knowledge must exist to output the artifact since the artifact is an existence proof of the program that output it.
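The guessing step can be sketched as a brute-force search over small programs. This is a minimal illustration, assuming the artifact is a short integer sequence and the agent's prior knowledge is a hypothetical set of three primitive operations (`inc`, `double`, `square`). None of these names come from the cited works, and exhaustive enumeration merely stands in for creativity here.

```python
from itertools import product

# Hypothetical primitives the agent already knows (its priors).
PRIMITIVES = {
    "inc": lambda x: x + 1,
    "double": lambda x: x * 2,
    "square": lambda x: x * x,
}


def run(program, seed, length):
    """Emit `length` values, applying the op sequence between each value."""
    values, x = [], seed
    for _ in range(length):
        values.append(x)
        for op in program:
            x = PRIMITIVES[op](x)
    return values


def guess_program(artifact, max_ops=3):
    """Try programs from shortest to longest; return the first that
    reproduces the artifact exactly, or None if none is found."""
    for n_ops in range(1, max_ops + 1):
        for program in product(PRIMITIVES, repeat=n_ops):
            if run(program, artifact[0], len(artifact)) == artifact:
                return program
    return None


# The artifact shows only its surface; the rule behind it is latent.
artifact = [1, 4, 10, 22, 46]
program = guess_program(artifact)
print(program)              # the recovered op sequence
print(run(program, 3, 4))   # a replica enacted in a new context
```

Because the search recovers a program rather than memorizing the sequence, the same program can produce a replica from a different starting value. The artifact itself guarantees that some generating program exists, but not that it lies within the agent's search space, which is why `guess_program` can return `None`.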

The path to training an AI with fluid intelligence must include culture: a deep library of artifacts containing latent knowledge that the agent must learn to replicate. Or to turn it around, we might look at the training set for intelligent agents as the emerging and evolving culture for an AI.

That culture would need to be vast, with varying levels of difficulty, to support a low-skill learner starting with the basics and progressing to advanced material. Because such an edifice would be so difficult to create from scratch, it is our own culture from which the AI would learn to be intelligent. And because our culture includes the idea of innovation along with a complex of other rational memes, it would be sufficient for an AI to learn to be truly creative and original.

REFERENCES

Bennett, Charles, Logical Depth and Physical Complexity

Blackmore, Susan, Meme Machine

Chollet, Francois, 2019, On the Measure of Intelligence

Dawkins, Richard, The Selfish Gene

Deutsch, David, The Beginning of Infinity

Deutsch, David, 2012, How close are we to creating artificial intelligence, https://aeon.co/essays/how-close-are-we-to-creating-artificial-intelligence

Legg, Shane and Hutter, Marcus, 2007, Universal Intelligence: A Definition of Machine Intelligence

Popper, Karl, Objective Knowledge
